Taking control of polarization in social networks

Let's talk about a word we're all uncomfortable with: "doomscrolling". In the age of rapid dissemination of information on social media, unfortunately, this is something we all do in our free time. The next time you mindlessly scroll through Instagram or TikTok, I would like to encourage you all to think about how these apps keep you hooked.

Each social media platform has a built-in "recommender algorithm" that tracks your activity on that platform (for example, time spent on a particular video, likes and dislikes, comments, etc.) and tries to gauge your interests. Based on this activity, the algorithm (learn what an algorithm is) spits out new posts that you are most likely to engage with again. This is what we call "supervised learning" in artificial intelligence (AI).
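To make this concrete, here is a minimal sketch of engagement-based ranking. It is a toy illustration, not how any real platform works: the `Post` class, the `interest_scores` and `recommend` functions, and the idea of summarising activity as an engagement value between 0 and 1 per topic are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    topic: str


def interest_scores(history):
    """Average past engagement per topic from (topic, engagement) pairs."""
    totals, counts = {}, {}
    for topic, engagement in history:
        totals[topic] = totals.get(topic, 0.0) + engagement
        counts[topic] = counts.get(topic, 0) + 1
    return {topic: totals[topic] / counts[topic] for topic in totals}


def recommend(history, candidates, k=2):
    """Return the k candidate posts whose topic the user engaged with most."""
    scores = interest_scores(history)
    ranked = sorted(candidates, key=lambda post: scores.get(post.topic, 0.0), reverse=True)
    return ranked[:k]


# Hypothetical activity log: (topic, engagement in [0, 1]).
history = [("politics", 0.9), ("cooking", 0.2), ("politics", 0.7), ("sports", 0.5)]
candidates = [Post("a", "cooking"), Post("b", "politics"), Post("c", "sports")]
top = recommend(history, candidates)
```

The more you react to a topic, the higher it ranks next time, which is exactly the "more of what they react to" loop.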

So the proven route to keeping users hooked is giving them more of what they react to: it’s as simple as that. No wonder Google and Meta make billions each year from social media. But there is a dark side to these algorithms, whose first casualty is the users, i.e., us.

Polarizing posts have glued you to your phones

Yes, and you probably don't even realize it. Social media companies make the big bucks only when you're endlessly scrolling through your phone… which is less likely to happen if they promote nuanced posts. Unfortunately, this means that the algorithm overwhelmingly promotes extreme content to maintain high engagement. Leading up to the US elections in 2024, I witnessed this myself: I kept noticing posts on X (formerly Twitter) that were extremely in favour of either the Democrats or the Republicans, often demonizing the other party to a disturbing extent. As more users engage with these posts, thanks to their catchy and inflammatory headlines (often mixed with unverified or fake news), the posts gain visibility and are shown to even more people.

But why do people even read these articles, let alone engage with them? We all exhibit "confirmation bias", the tendency to favour information that confirms our existing beliefs. We all have our own opinions regarding whatever's happening every day, and the recommender algorithm is smart enough to exploit them for its own needs. As the recommender keeps showing us articles that reinforce our prior beliefs, we are likely to become more convinced of our initial positions on a topic, leading to the formation of "bubbles" and "echo chambers". On a societal level, this leads to polarization, and one cannot overstate how dangerous this is.
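This feedback loop can be sketched in a few lines. The model below is a deliberately crude assumption, not taken from our work: an opinion is a number between -1 and +1, "engagement" is scored as the product of the opinion and the article's position (so agreeing, extreme articles score highest), and the reader drifts a little towards whatever is shown. The names `most_engaging`, `simulate` and `susceptibility` are hypothetical.

```python
def most_engaging(opinion, candidates):
    """Pick the article position with the highest agreement score opinion * position."""
    return max(candidates, key=lambda position: opinion * position)


def simulate(opinion, steps=30, susceptibility=0.1):
    """Repeatedly show the most engaging article and let the opinion drift towards it."""
    candidates = [-1.0, -0.5, 0.0, 0.5, 1.0]
    trajectory = [opinion]
    for _ in range(steps):
        shown = most_engaging(opinion, candidates)
        opinion = (1 - susceptibility) * opinion + susceptibility * shown
        trajectory.append(opinion)
    return trajectory


# A mildly opinionated reader, starting at 0.2 on the [-1, +1] scale.
trajectory = simulate(opinion=0.2)
```

In this toy model, even a mild initial leaning is pushed steadily towards the extreme on the same side, which is the "bubble" effect in miniature.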

The pressing problem is that modern recommender algorithms are, in fact, designed this way to maximize profits through visibility (although the billion-dollar companies claim otherwise), thus disrupting social harmony. An even more sinister aspect is that fake news is creeping into algorithms undetected, and algorithm designers may be pursuing their own goals.

With this problem in mind, we at the Automatic Control Laboratory and NCCR Automation wanted to find out whether it is possible to develop recommender algorithms that keep engagement high without polarizing societies. The good news is that we may have found a solution!

Balancing engagement & polarization

Imagine you want to buy a laptop online. Normally, we'd ask friends and colleagues for their opinions on that laptop before coming to a decision. Similarly, whenever we read some interesting news online, we discuss it with our colleagues over lunch and then form an opinion on the topic. Thus, our network plays an important role in decision making, especially online.

Unfortunately, the AI-based recommenders used by the big social media companies don't explicitly take this network into consideration. Yes, the algorithms do track who you're connected with on social media and your activities, but they don't make constructive use of this information. Most algorithms give overwhelming priority to "personalisation" (i.e. how you engage with the platform) rather than "network awareness" (i.e. how you engage with others on the platform).

We had the intuition that since the polarization of societies and the formation of bubbles are more of a network phenomenon (rather than something arising purely from personalization), we could explicitly use the network to reduce polarization while still using personalized algorithms to increase engagement. This is what we call a "network-aware" recommender system.

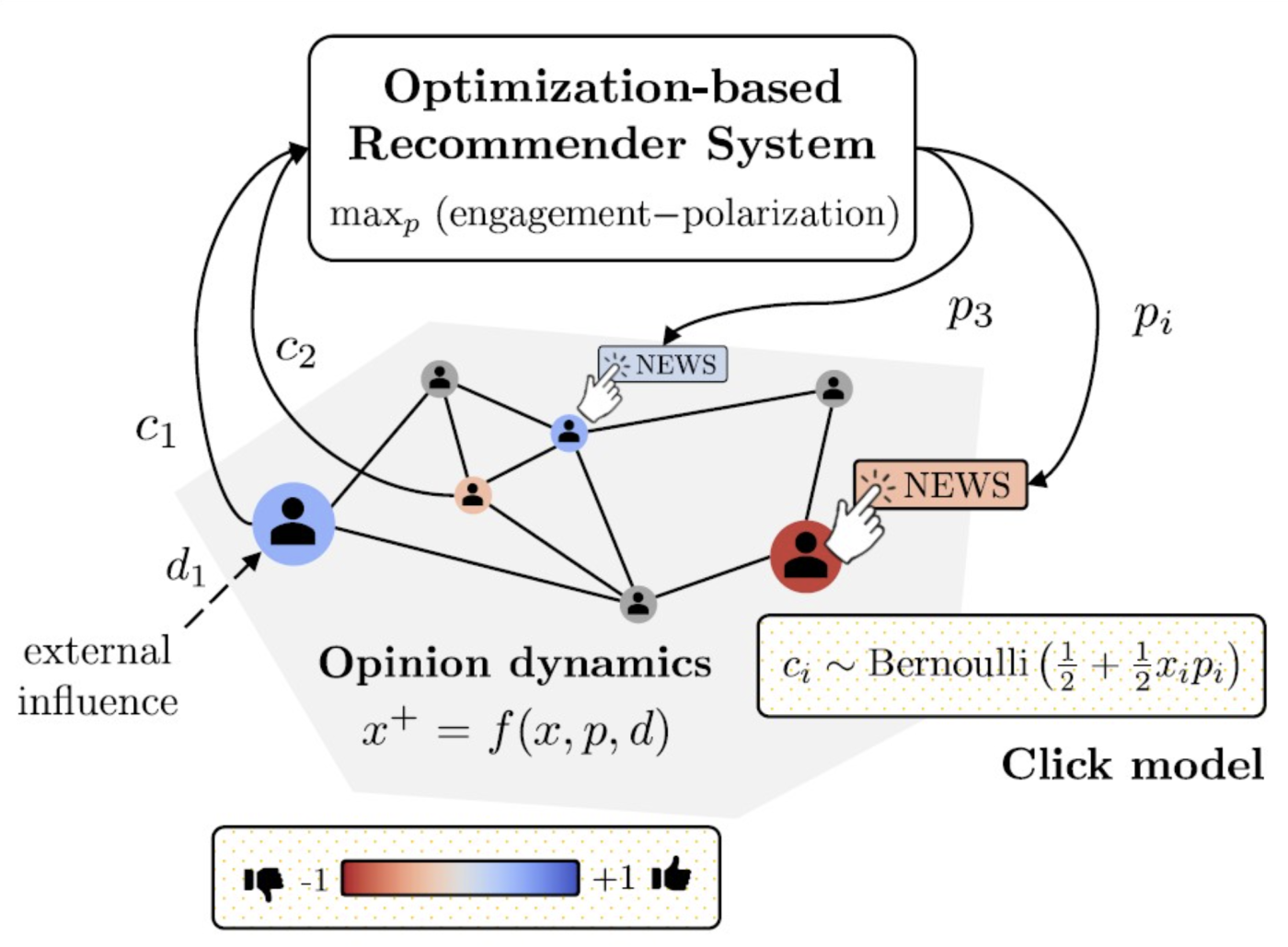

To do this particular task, we developed an optimization problem (learn more about what optimization is) that looked like this:

maximize objective function (engagement - polarization)

subject to constraints (opinion dynamics)

In a typical optimization problem, there is an objective function that is a “target” (in this context, maximizing engagement while minimizing polarization), along with constraints that may prevent you from getting the best solution. In this context, the constraints describe how opinions evolve over time, in a closed loop with the recommended articles and the influence of other users.
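The two ingredients of this problem can be sketched as follows. Everything specific here is an assumption made for illustration and not necessarily the model we used: opinions lie in [-1, +1], each update mixes a user's own prior, the opinions of their neighbours and the position of the recommended article, engagement is higher when an article is close to the reader's opinion, and polarization is measured as the variance of opinions. The function names and the weights `stubbornness`, `rec_weight` and `trade_off` are hypothetical.

```python
def step(opinions, priors, weights, positions, stubbornness=0.3, rec_weight=0.2):
    """One opinion update (the constraint): prior + neighbours + recommended article."""
    social_weight = 1.0 - stubbornness - rec_weight
    updated = []
    for i, prior in enumerate(priors):
        # weights[i] sums to 1 and says how much user i listens to each other user.
        social = sum(w * x for w, x in zip(weights[i], opinions))
        updated.append(stubbornness * prior + social_weight * social + rec_weight * positions[i])
    return updated


def engagement(opinions, positions):
    """Average engagement in [0, 1]; highest when articles match opinions."""
    return sum(1.0 - 0.5 * abs(x - p) for x, p in zip(opinions, positions)) / len(opinions)


def polarization(opinions):
    """Spread of opinions, measured here as their variance."""
    mean = sum(opinions) / len(opinions)
    return sum((x - mean) ** 2 for x in opinions) / len(opinions)


def objective(opinions, positions, trade_off=1.0):
    """The target: engagement minus (weighted) polarization."""
    return engagement(opinions, positions) - trade_off * polarization(opinions)


priors = [-0.8, 0.0, 0.8]
weights = [[0.0, 0.5, 0.5], [0.5, 0.0, 0.5], [0.5, 0.5, 0.0]]
opinions = list(priors)
for _ in range(20):
    positions = list(opinions)  # purely personalised: show each user their own view
    opinions = step(opinions, priors, weights, positions)
score = objective(opinions, positions)
```

The recommender only chooses `positions`; the opinions then follow from the dynamics, which is why the dynamics appear as constraints rather than as something the algorithm can set directly.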

What are the challenges in designing such an algorithm?

Any recommender can learn your preferences and optimize accordingly, using well-developed AI tools. Thus, the engagement-maximizing part is not too difficult to solve. However, minimizing polarization means that the recommender should know where you stand (for example, on a scale from -1 to +1, +1 if you lean strongly towards the Democrats, vs -1 if you're strongly in favour of the Republicans) and learn how well connected you are with your network. This is not trivial to obtain.

In a realistic setup, you are not going to explicitly tell X or Instagram that you prefer one side over the other, nor are they going to ask you. Such an explicit violation of privacy would cause outrage (although some algorithms have their own cheeky ways of finding out!). Neither are you going to tell them whose opinions you value the most in your network, nor whom you hate the most. In addition to this, the social media platform doesn't know your preferences when it comes to viewing articles; for example, you may be more inclined towards reading something from the Guardian as opposed to the Telegraph.

Thus, your real-time opinions, how you perceive your social network and how you view articles (i.e. your clicking behaviour) are all unknown to the algorithm a priori.
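To give a flavour of how an unknown opinion might be inferred from clicks alone, here is a very simple stand-in estimator. It is not the estimation method used in our work: it just nudges a running estimate towards the position of every article the user clicks on and ignores articles they skip. The function `update_estimate`, the `gain` parameter and the click data are all made up for this example.

```python
def update_estimate(estimate, article_position, clicked, gain=0.2):
    """Move the opinion estimate towards a clicked article; ignore skipped ones."""
    if not clicked:
        return estimate
    return estimate + gain * (article_position - estimate)


# Hypothetical log: (article position in [-1, +1], whether the user clicked).
observations = [(0.8, True), (-0.6, False), (0.5, True), (0.9, True), (-0.2, False)]

estimate = 0.0  # start from a neutral guess
for article_position, clicked in observations:
    estimate = update_estimate(estimate, article_position, clicked)
```

After a handful of clicks on articles from one side, the estimate leans that way, without the user ever stating their opinion.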

Turns out a "network-aware" algorithm helps us immensely!

We use a combination of standard estimation algorithms in control theory and neural networks (read more about neural networks) to solve these problems and employ "Online feedback optimization" (OFO), a very convenient tool (also developed by NCCR Automation researchers!) to solve the identified optimization problem.
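The core idea of OFO, taking small gradient steps based on what is actually measured instead of relying on a full model of the users, can be sketched for a single user. This is a toy under stated assumptions, not our algorithm: the function `measured_opinion` stands in for the real platform and is hidden from the optimizer, which only knows a rough `sensitivity` of opinions to recommendations. The objective is assumed to be -(position - opinion)^2 - trade_off * opinion^2, i.e. "stay close to the reader, but don't push them away from neutral".

```python
def clip(value, low=-1.0, high=1.0):
    """Keep article positions inside the allowed range [-1, +1]."""
    return max(low, min(high, value))


def measured_opinion(position, prior=0.8, sensitivity=0.4):
    """Stand-in for the real platform: steady-state opinion after showing `position`."""
    return (1.0 - sensitivity) * prior + sensitivity * position


def ofo(trade_off, steps=100, step_size=0.5, sensitivity=0.4):
    """Gradient ascent on the objective, using measured opinions as feedback."""
    position = 0.0
    for _ in range(steps):
        opinion = measured_opinion(position)  # feedback: measure, don't predict
        gap = position - opinion
        # Chain rule: direct effect of the position plus its effect via the opinion.
        gradient = -2.0 * (1.0 - sensitivity) * gap - 2.0 * trade_off * sensitivity * opinion
        position = clip(position + step_size * gradient)
    return position, measured_opinion(position)


naive_position, naive_opinion = ofo(trade_off=0.0)  # personalisation only
aware_position, aware_opinion = ofo(trade_off=1.0)  # also penalise polarization
```

With `trade_off=0.0` the recommender simply mirrors the reader's view, while a positive `trade_off` settles on more moderate articles. The sketch only illustrates the mechanics of the feedback loop and says nothing about the engagement results reported in this article.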

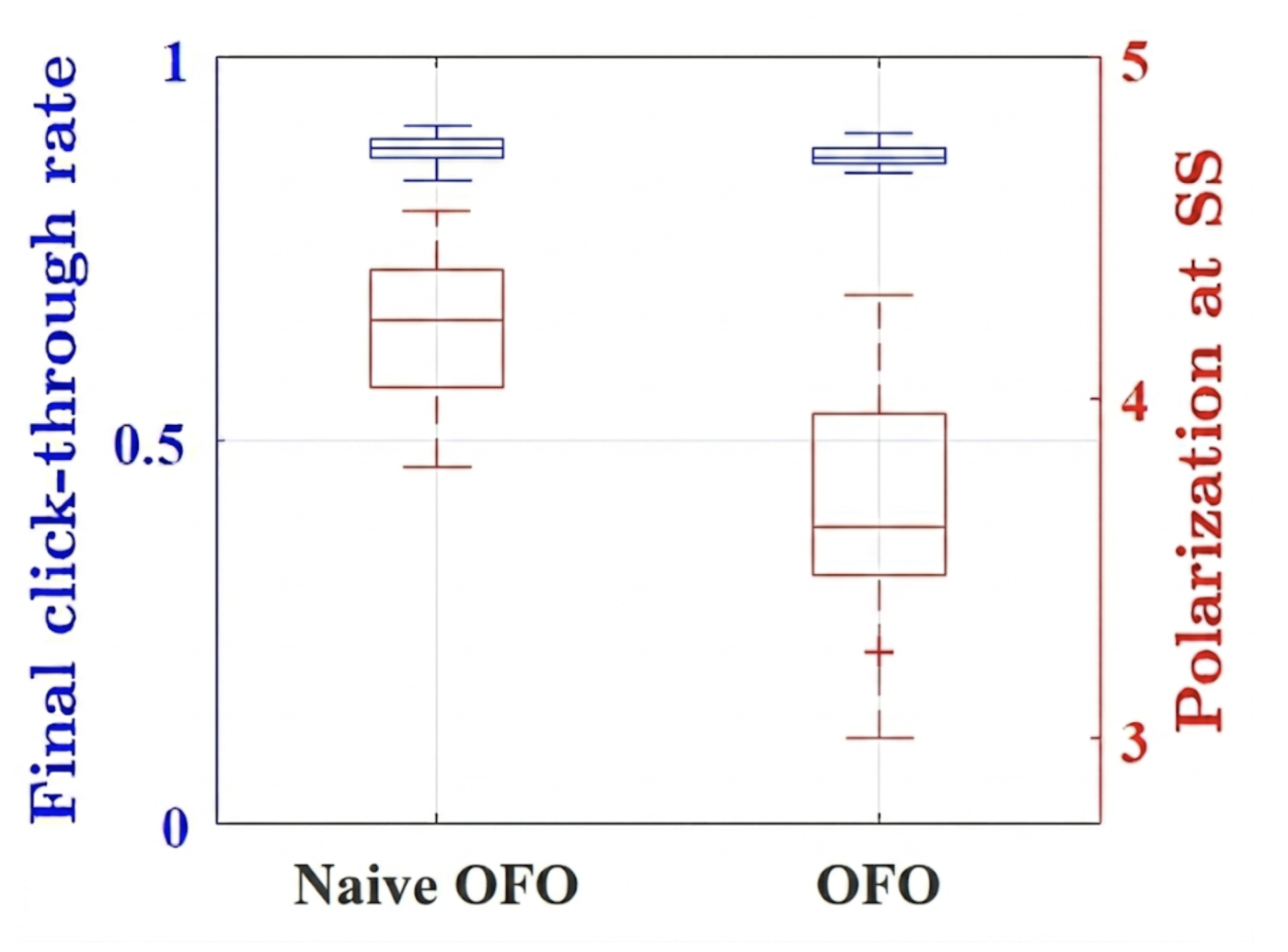

After running some simulations and comparing with a "naive" case (where personalization alone was the objective and the network was ignored), we were able to show that our algorithm worked much better in terms of maintaining high levels of engagement while reducing polarization in social networks (see Fig. 2).

Our empirical results essentially show that you do not need to sacrifice engagement on social media platforms in order to minimize polarization. We sincerely hope that in the future, the big platforms alter their algorithms in favour of "safe" designs that promote less radicalization and actively work towards the urgent goal of reducing polarization in social networks.

Readers can find a complete technical and rigorous explanation of our algorithm here.

*“Steady-state” in control theory is just a euphemism that represents the end of a simulation.